Machine Learning For Engineers: Reading List 1

Updated! Please see Machine Learning for Engineers: Book List for a more recent set of recommendations.

This is the first of a set of reading and book lists written from the point of view of a software engineer who wants to develop a basic knowledge of machine learning fundamentals. As an engineer, you are going to be working with libraries and frameworks for the most part, but ideally you’ll have a grasp of the ideas underlying the technology as well. In this part we'll look at some introductory books that are 🤗, and first, some background books for machine learning mathematics 😬.

Companion Mathematics

Work can get done in applied machine learning without mathematics. It is useful though for developing an intuition of what these systems are doing, helps with working through the introductory books, and is needed for the more advanced books. For what it's worth, when it comes to applied machine learning, time spent on the foundational/math side never feels like wasted time - personally I always wish I had a much stronger grasp. With that in mind, some good topics to know are:

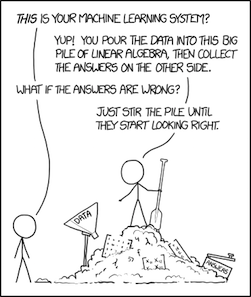

Linear algebra and matrix manipulation. Linear algebra is everywhere in machine learning and you’ll see it even in basic material. Algebra and matrix operations are useful to know in roughly the same sense bitwise and binary operations are - a lot of real world machine (and especially deep) learning involves matrix swizzling. In practice libraries do the work for you, so you won't need to code this stuff up.
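To give a feel for the kind of operation involved, here is a minimal sketch of a matrix-vector product in plain Python, roughly what a single neural network layer computes before any activation is applied. The `matvec` helper, the weights and the inputs are all made up for illustration; a real library would do this far faster.

```python
def matvec(matrix, vector):
    """Multiply a matrix (a list of rows) by a vector (a list of numbers)."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


# Hypothetical weights for a layer with 3 inputs and 2 outputs.
weights = [
    [1.0, 0.0, -1.0],
    [0.5, 0.5, 0.5],
]
inputs = [2.0, 4.0, 6.0]

# Each output is the dot product of one row of weights with the inputs.
outputs = matvec(weights, inputs)
print(outputs)  # [-4.0, 6.0]
```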

Some statistics and regression. Like linear algebra, these topics appear early on in machine learning texts. Regression, analysing things to see if there’s a relationship between variables (eg, weather and ice cream sales) also appears in fields like economics and business optimisation/planning as a basic form of prediction (weather may indeed predict ice cream sales).
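As a rough illustration of the ice cream example, the sketch below fits a straight line by ordinary least squares in plain Python. The temperatures and sales figures are invented for the example, and `fit_line` is a hypothetical helper rather than anything from a particular library.

```python
def fit_line(xs, ys):
    """Fit y = slope * x + intercept by ordinary least squares."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Slope is the covariance of x and y divided by the variance of x.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    slope = cov / var
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Made-up data: daily temperature (Celsius) and ice creams sold.
temps = [15.0, 20.0, 25.0, 30.0]
sales = [100.0, 150.0, 200.0, 250.0]

slope, intercept = fit_line(temps, sales)

# Use the fitted line as a basic prediction for a 28 degree day.
predicted = slope * 28.0 + intercept
print(slope, intercept, predicted)
```

With this (perfectly linear) toy data the fitted line says each extra degree is worth about ten more sales; real data would be noisier.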

Probability. A handle on linear algebra, matrices and stats topics like regression is initially more useful, but probability becomes important since machine learning is so often dealing with real world uncertainty, and machine learning results are often expressed as likelihoods. The Deep Learning book for example goes straight to probability after linear algebra in its concepts overview chapter.

Calculus. The amount of calculus worth knowing initially is small, just enough to get a handle on things like gradient descent (which uses differentiation to work out which direction to nudge a model's parameters as it “learns”, with a learning rate setting the size of each step) or backpropagation in neural networks. Again, libraries do this for you (in Keras you’re going to plug in a number for the learning rate and go get some coffee) but it’s nice to have an intuition for what’s going on.
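For intuition, here is a minimal sketch of gradient descent on a toy one-parameter problem, assuming a made-up loss of (w - 3)^2 whose minimum sits at w = 3. The function names and the learning rate of 0.1 are illustrative choices, not anything a library prescribes.

```python
def loss(w):
    """Toy loss with its minimum at w = 3."""
    return (w - 3.0) ** 2


def gradient(w):
    """Derivative of the toy loss with respect to w."""
    return 2.0 * (w - 3.0)


learning_rate = 0.1  # the number you would "plug in"
w = 0.0              # arbitrary starting guess

for step in range(100):
    # Step against the gradient, scaled by the learning rate.
    w -= learning_rate * gradient(w)

print(w, loss(w))
```

The derivative says which way is downhill and the learning rate says how far to step; too large a rate overshoots the minimum, too small a rate takes a long time to get there.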

Some useful books:

Linear Algebra: Step by Step by Kuldeep Singh. There are a lot of linear algebra books, and this one I really like as a companion text and a self-study catchup, especially if it’s been a while since you’ve “done math”. It levels up chapter by chapter very well and is a really clearly written text book. As an added bonus it formats well on Kindle.

Introduction to Linear Algebra by Gilbert Strang. You’ll see this widely recommended as a standard text, and it has a good focus on applications (there are other highly regarded texts which are more focused on linear algebra as its own area of study). The lecture series is online and is pretty great.

Coding the Matrix: Linear Algebra through Applications to Computer Science by Philip Klein. Stands firmly on the application side and is best used as a complement to one of the ones above rather than a standalone text. If you’re already comfortable with linear algebra and/or Python, this book and its website are a good way to develop an applied view.

Probability: For the Enthusiastic Beginner by David Morin is a general, and extremely well written overview of probability. It's a real gem and highly accessible.

Bayes' Rule: A Tutorial Introduction to Bayesian Analysis by James Stone. This is a good crisp introduction that focuses on Bayesian probability.

Calculus: An Intuitive and Physical Approach by Morris Kline, or The Calculus Lifesaver by Adrian Banner would be enough to get going. Banner also has lectures online. There are other more standard texts (Thomas, Stewart), though I’d argue you don’t need them initially. If you're feeling retro, there's Calculus Made Easy from 1910, which is really quite a good and considerate introduction.

Check out electronic editions before you buy them! Mathematics books, like programming books, don't always convert well.

Introductory Machine Learning Books

Bandit Algorithms for Website Optimization by John Myles White. This isn't a machine learning book, but acts as a good introduction to reinforcement learning. Via multi-armed bandits it presents non-mathematical overviews of approaches like epsilon-greedy, softmax and upper confidence bounds. After reading this, you’re ready to look at reinforcement learning proper, a very interesting area with lots of potential; probably worth knowing for the future.
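To give a flavour of the simplest of these approaches, here is a minimal sketch of an epsilon-greedy bandit in plain Python. It is not taken from the book; the `run_epsilon_greedy` function, the three payout rates and the value of epsilon are all assumptions made up for illustration.

```python
import random


def run_epsilon_greedy(true_rates, epsilon, rounds, seed=0):
    """Simulate an epsilon-greedy player on arms with fixed payout rates."""
    rng = random.Random(seed)
    counts = [0] * len(true_rates)    # pulls per arm
    values = [0.0] * len(true_rates)  # running mean reward per arm
    for _ in range(rounds):
        if rng.random() < epsilon:
            # Explore: pick an arm uniformly at random.
            arm = rng.randrange(len(true_rates))
        else:
            # Exploit: pick the arm with the best estimate so far.
            arm = max(range(len(true_rates)), key=lambda i: values[i])
        reward = 1.0 if rng.random() < true_rates[arm] else 0.0
        counts[arm] += 1
        # Incremental update of the running mean for this arm.
        values[arm] += (reward - values[arm]) / counts[arm]
    return counts, values


# Hypothetical payout rates, e.g. click-through rates for three page variants.
counts, values = run_epsilon_greedy([0.2, 0.5, 0.8], epsilon=0.1, rounds=5000)
print(counts, values)
```

Most of the time the player exploits whichever arm currently looks best, and a small fraction of the time (epsilon) it explores at random so that a good arm with an unlucky start still gets noticed.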

Data Smart: Using Data Science to Transform Information into Insight by John Foreman. Under the rubric of data science it covers k-means, naive Bayes, nearest neighbours and linear regression, among others. Incredibly it does all this just using spreadsheets, making it a highly accessible and grounded book. Also recommended as a gateway book to ML for managers.

An Introduction to Statistical Learning: with Applications in R by Gareth James, Trevor Hastie, Robert Tibshirani, Daniela Witten. This is a great introduction to learning techniques and approaches to selecting and evaluating them. The book describes itself as a less formal and more accessible version of The Elements of Statistical Learning, which we'll look at in part 2. For a working engineer, a language other than R for the examples would have been ideal. R is a choice reflecting the book's origins (statistics rather than software engineering) but the examples are well done, and there's more on their website.

Artificial Intelligence: A Modern Approach, by Stuart Russell and Peter Norvig. The AIMA book is a classic and, as a survey of artificial intelligence techniques, is broader than the other books mentioned here - learning is just a section - and the book also covers topics like search exploration, logic programming, agents and knowledge representation. The website has a bunch of complementary resources.

Conclusion

I hope this list was useful, and if nothing else, motivates you to select your own background reading! Please do look around for other options if these don't seem right for you! Some books click for people in ways others don't - for example I'm more or less math impaired, but Kuldeep Singh's book worked really well for me once I found it. I've found Quora a great place for browsing suggestions.

In part two, we'll cover programming books for machine learning.